For more than 250 years, engineers have tried to make machines speak. What began with mechanical vowel tubes and bellows now runs on neural networks that can clone a voice from just a few seconds of audio.

As Dennis Klatt observed in his 1987 review of speech synthesis research, the dream of creating a machine that speaks has been one of the longest-running projects in engineering.

Here is every major turning point in text-to-speech (TTS), from the first artificial vowel to today's conversational AI voices.

TL;DR

- Early TTS was mechanical, then electrical, then rule-based software

- Concatenative and statistical methods dominated the 1990s and 2000s

- Neural TTS (2016 to present) reached near-human quality and enabled voice cloning from short audio samples

1769 to 1791: the first artificial voices

Kratzenstein's vowel tubes

In 1779, Christian Kratzenstein built acoustically shaped tubes that reproduced the five long vowel sounds: A, E, I, O, U. It was one of the earliest documented attempts at artificial speech.

Von Kempelen's speaking machine

Wolfgang von Kempelen spent 20 years refining a device that used a bellows, a vibrating reed, and a leather tube the operator shaped by hand to produce whole words and short phrases. He published a description of it in 1791, and it remained among the most advanced speech devices for over a century. The original machine survives today at the Deutsches Museum in Munich.

1939 to 1984: from electronics to desktops

The Voder (Bell Labs, 1939)

Homer Dudley's VODER (Voice Operating Demonstrator) debuted at the 1939 World's Fair. A trained operator used a keyboard and foot pedals to control electronic circuits that generated speech in real time. Operators needed roughly a year of practice to use it fluently. The Voder proved that intelligible speech could be generated from pure electronics: no recording, no acoustic source.

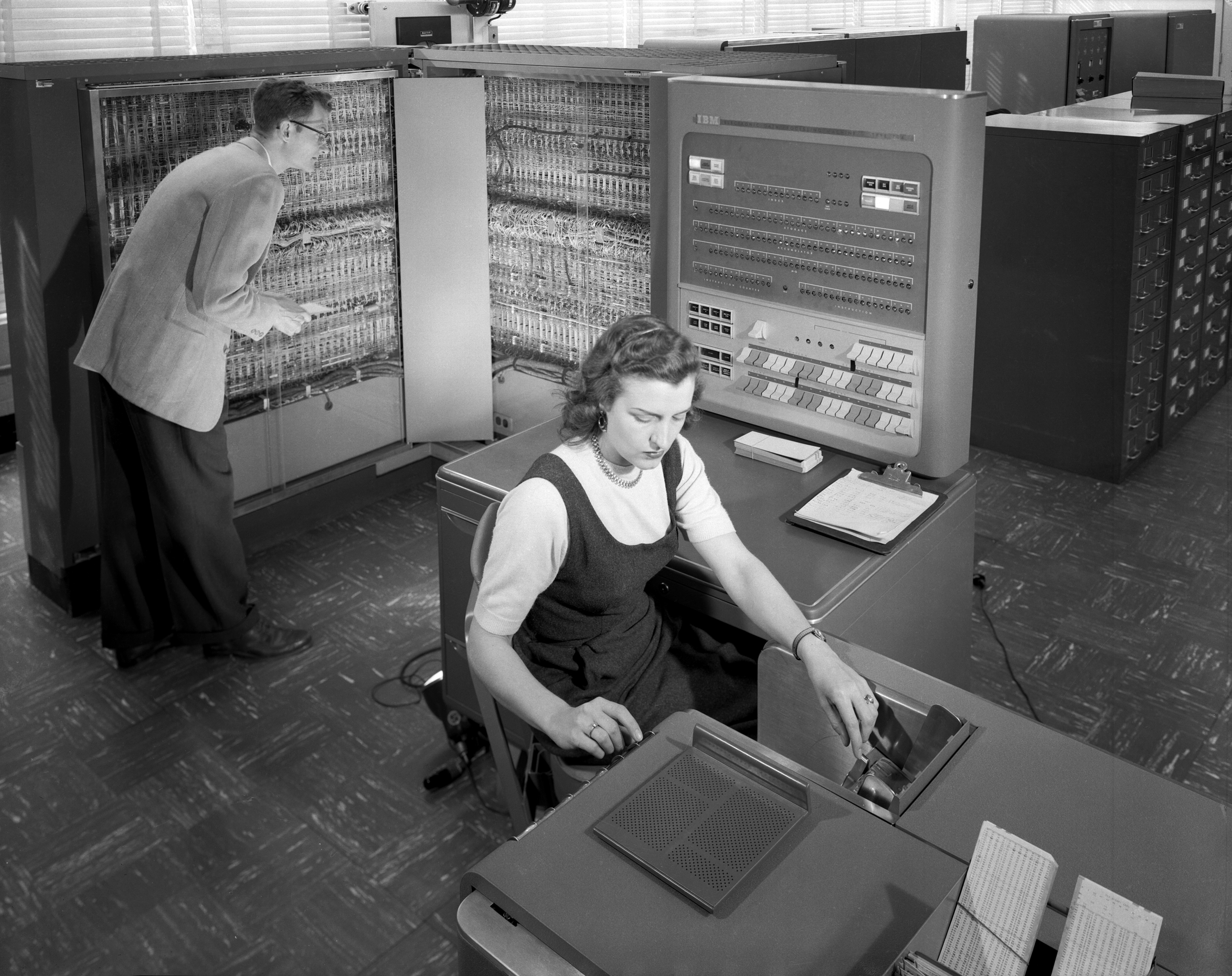

"Daisy Bell" on the IBM 704 (Bell Labs, 1961)

John Larry Kelly Jr. used an IBM 704 computer and a vocoder to synthesize "Daisy Bell (Bicycle Built for Two)," with accompaniment by Max Mathews. It holds the Guinness World Record for the first song performed using computer speech synthesis. Arthur C. Clarke witnessed the demo and later wrote it into HAL 9000's death scene in 2001: A Space Odyssey.

Kurzweil Reading Machine (1976)

Ray Kurzweil combined optical character recognition (OCR) with text-to-speech to build a device that read printed text aloud for blind and visually impaired users. Stevie Wonder was one of the first customers. It was the first commercial product to pair OCR with TTS and proved there was real-world demand for the technology.

DECtalk (1983) and MacinTalk (1984)

Dennis Klatt's formant synthesis research at MIT became the engine behind DECtalk, released by DEC in 1983. It offered multiple voices, including "Perfect Paul," modeled on Klatt's own voice. DECtalk became the gold standard for commercial TTS for over a decade; Stephen Hawking used a system based on the same technology as his voice from the mid-1980s onward.

The original Macintosh shipped with MacinTalk in 1984. At the launch event, Steve Jobs had the computer introduce itself by speaking to the audience. MacinTalk put TTS on millions of desktops for the first time.

1990 to 2005: concatenative and statistical synthesis

PSOLA (France Telecom, 1990)

Eric Moulines and Francis Charpentier published the PSOLA (Pitch-Synchronous Overlap-Add) algorithm in the journal Speech Communication. The algorithm modifies the pitch and duration of recorded speech segments without significant quality loss. PSOLA unlocked practical concatenative synthesis: instead of generating speech from rules, systems could splice and adjust recordings of real human speech.
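To make the overlap-add idea concrete, here is a minimal sketch in Python. It is a toy, not the published algorithm: it assumes the pitch period is constant and already known, whereas real systems have to detect pitch marks in the recording. The function name `psola_pitch_shift` and the test tone are made up for illustration.

```python
import math

def psola_pitch_shift(signal, period, factor):
    """Toy time-domain PSOLA: change pitch by `factor`, keep duration.

    Assumes a constant pitch period (in samples), so pitch marks sit at
    every multiple of `period`. Real recordings need pitch-mark detection.
    """
    # Hann window two periods wide; copies spaced one period apart sum to 1.
    window = [0.5 - 0.5 * math.cos(math.pi * i / period)
              for i in range(2 * period + 1)]
    marks = list(range(period, len(signal) - period, period))
    new_period = max(1, round(period / factor))
    out = [0.0] * len(signal)
    t = period
    while t < len(signal) - period:
        # Reuse the recorded grain whose pitch mark is closest in time.
        nearest = min(marks, key=lambda m: abs(m - t))
        for k in range(-period, period + 1):
            out[t + k] += signal[nearest + k] * window[k + period]
        t += new_period  # closer spacing -> higher pitch
    return out

period = 40
tone = [math.sin(2 * math.pi * i / period) for i in range(800)]
higher = psola_pitch_shift(tone, period, 1.25)  # same length, grains every 32 samples
```

With `factor=1.0` the grains land exactly where they came from and the interior of the signal is reconstructed unchanged, which is a handy sanity check for the windowing.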

Festival (University of Edinburgh, 1995)

Alan Black and Paul Taylor released Festival, an open-source, multi-lingual TTS framework. It became the dominant research platform worldwide and democratized access to speech synthesis tools.

AT&T Natural Voices (2000)

AT&T Labs released Natural Voices, a unit-selection system that picked from thousands of recorded speech segments to construct each utterance. Voices like "Crystal" and "Mike" represented the peak of concatenative TTS quality and saw wide deployment in IVR and telephony systems.
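Unit selection is usually framed as a search problem: choose one recorded unit per slot so that the units match what the text calls for (target cost) and also join smoothly with their neighbors (join cost). The sketch below shows that search with a small Viterbi pass. The single `pitch` feature, the unit IDs, and the cost functions are illustrative assumptions; production systems score many acoustic and linguistic features.

```python
def select_units(targets, candidates, join_weight=1.0):
    """Pick one unit per slot, minimizing total target cost + join cost."""
    # best[i][j] = (cheapest total cost ending at candidates[i][j], back-pointer)
    best = [[(abs(u["pitch"] - targets[0]), None) for u in candidates[0]]]
    for i in range(1, len(targets)):
        row = []
        for unit in candidates[i]:
            target_cost = abs(unit["pitch"] - targets[i])
            # Cheapest way to arrive at this unit from any unit in the previous slot.
            prev_j, path_cost = min(
                ((j, best[i - 1][j][0]
                  + join_weight * abs(prev["pitch"] - unit["pitch"]))
                 for j, prev in enumerate(candidates[i - 1])),
                key=lambda item: item[1],
            )
            row.append((path_cost + target_cost, prev_j))
        best.append(row)

    # Trace back from the cheapest final unit.
    j = min(range(len(best[-1])), key=lambda k: best[-1][k][0])
    path = []
    for i in range(len(targets) - 1, -1, -1):
        path.append(candidates[i][j]["id"])
        j = best[i][j][1]
    return path[::-1]

# Hypothetical mini-database: two recorded candidates per slot.
candidates = [
    [{"id": "h_1", "pitch": 100}, {"id": "h_2", "pitch": 140}],
    [{"id": "e_1", "pitch": 150}, {"id": "e_2", "pitch": 112}],
    [{"id": "l_1", "pitch": 119}, {"id": "l_2", "pitch": 160}],
]
path = select_units(targets=[100, 110, 120], candidates=candidates)
```

The need for many candidates per slot is also why these systems carried such large voice databases.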

| Approach | How it works | Trade-off |
|---|---|---|
| Formant synthesis | Rules control electronic resonance parameters | Small footprint; robotic sound |
| Concatenative (unit selection) | Selects and splices recorded speech segments | Natural sound; large database, inflexible |
| Statistical parametric (HMM) | Trained models predict speech parameters | Tiny footprint, adaptable; "buzzy" vocoder quality |
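For a feel of what "rules control resonance parameters" means in the formant row, here is a minimal sketch: an impulse train stands in for the vocal folds, and a cascade of two-pole resonators shapes it into a vowel. The resonator uses the standard two-pole digital form found in Klatt-style synthesizers; the formant frequencies are textbook approximations for an "ah" vowel, and the bandwidths, pitch, and function names are illustrative assumptions rather than values from any shipping product.

```python
import math

def resonator(signal, freq, bandwidth, sample_rate):
    """Two-pole digital resonator modeling a single formant."""
    t = 1.0 / sample_rate
    c = -math.exp(-2 * math.pi * bandwidth * t)
    b = 2 * math.exp(-math.pi * bandwidth * t) * math.cos(2 * math.pi * freq * t)
    a = 1 - b - c  # normalizes gain to 1 at 0 Hz
    y1 = y2 = 0.0
    out = []
    for x in signal:
        y = a * x + b * y1 + c * y2
        out.append(y)
        y2, y1 = y1, y
    return out

def synthesize_vowel(formants, f0=120, duration=0.3, sample_rate=16000):
    """Impulse-train source passed through a cascade of formant resonators."""
    n = int(duration * sample_rate)
    period = sample_rate // f0
    wave = [1.0 if i % period == 0 else 0.0 for i in range(n)]
    for freq, bandwidth in formants:
        wave = resonator(wave, freq, bandwidth, sample_rate)
    return wave

# (frequency Hz, bandwidth Hz) for F1..F3; approximate values for "ah".
ah = synthesize_vowel([(730, 90), (1090, 110), (2440, 170)])
```

Changing only those three number pairs produces a different vowel, which is why formant systems were so compact, and also why they sounded robotic.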

HTS and HMM-based synthesis (2005)

Keiichi Tokuda, Heiga Zen, and colleagues built HTS, a system that trained Hidden Markov Models to predict spectral and pitch parameters from text, as described in their SSW6 paper. Instead of splicing recordings, it generated speech from learned statistical patterns. The result had a smaller footprint than concatenative systems and could adapt to new voices with less data, but vocoder-generated audio sounded muffled compared to real recordings.

2011 to 2019: voice assistants and the neural revolution

Siri (Apple, 2011)

Siri launched on the iPhone 4S in October 2011, making voice interaction with a mobile device mainstream. It triggered an industry-wide arms race in voice assistants and made TTS quality a consumer differentiator.

Amazon Alexa and Echo (2014)

Amazon's Echo, powered by Alexa, launched in late 2014. Its voice was built on technology from IVONA, a Polish TTS company Amazon acquired in 2013. The Echo created the smart speaker category and put TTS in millions of homes.

WaveNet (DeepMind, 2016)

DeepMind's WaveNet generated raw audio waveforms sample by sample using an autoregressive neural network. In listening tests, its speech was rated significantly closer to natural recordings than the best concatenative and parametric systems of the time. The catch: generating one second of audio initially took minutes of compute.
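A rough sketch of why sample-by-sample generation was so slow: WaveNet treated each audio sample as a choice among 256 classes (8-bit mu-law companding), and every new sample had to wait for the previous ones. The code below shows the companding math and the shape of the loop. The `predict_next` callback is a hypothetical stand-in for the trained network, and the 16 kHz rate and 1024-sample context are assumptions for illustration.

```python
import math

MU = 255  # 8-bit mu-law, giving 256 output classes

def mu_law_encode(x):
    """Compress a sample in [-1, 1] and quantize it to an integer in 0..255."""
    compressed = math.copysign(math.log1p(MU * abs(x)) / math.log1p(MU), x)
    return int((compressed + 1) / 2 * MU + 0.5)

def mu_law_decode(index):
    """Map a class index in 0..255 back to an approximate sample in [-1, 1]."""
    compressed = 2 * index / MU - 1
    return math.copysign(
        math.expm1(abs(compressed) * math.log1p(MU)) / MU, compressed
    )

def generate(predict_next, n_samples, context=1024):
    """Autoregressive loop: each new sample depends on the ones before it."""
    samples = [mu_law_encode(0.0)]  # start from silence
    for _ in range(n_samples):
        samples.append(predict_next(samples[-context:]))
    return samples[1:]

# Stand-in predictor (hypothetical): always outputs the "silence" class.
# One second at an assumed 16 kHz means 16,000 sequential predictions.
audio = [mu_law_decode(i) for i in generate(lambda history: 128, n_samples=16000)]
```

Because the loop cannot be parallelized across time, one second of audio costs thousands of full network evaluations in sequence, which is the bottleneck later vocoders were designed to remove.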

Tacotron 2 (Google, 2017 to 2018)

Google's Tacotron 2 combined a sequence-to-sequence spectrogram predictor with a WaveNet vocoder. In the paper's listening tests on single-speaker read speech, it scored a MOS (Mean Opinion Score) of 4.53, against 4.58 for professionally recorded human speech. That result established that neural TTS could reach near-human quality under controlled conditions.
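A MOS is simply the average of listener ratings on a 1-to-5 scale, usually reported with a confidence interval. Here is a minimal sketch of that calculation; the ratings are invented for illustration, and the 1.96 multiplier assumes a normal approximation for the 95% interval.

```python
import math
import statistics

def mean_opinion_score(ratings):
    """Return (MOS, 95% confidence half-width) for 1-5 listener ratings."""
    mos = statistics.mean(ratings)
    half_width = 1.96 * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mos, half_width

# Hypothetical ratings from ten listeners for one synthesized clip.
ratings = [5, 4, 5, 4, 4, 5, 3, 5, 4, 5]
mos, half_width = mean_opinion_score(ratings)
print(f"MOS = {mos:.2f} +/- {half_width:.2f}")
```

When two systems' intervals overlap, as they can when scores are only a few hundredths apart, listeners are not reliably telling them apart.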

Cloud neural TTS APIs (2018 to 2019)

Google Cloud TTS launched with WaveNet voices in 2018. Microsoft Azure and Amazon Polly followed with their own neural engines in 2019. Neural TTS went from research breakthrough to a commodity any developer could access through an API call.

2022 to 2025: voice cloning and conversational AI

ElevenLabs (2022)

Founded by Piotr Dabkowski and Mati Staniszewski, ElevenLabs made high-quality voice cloning accessible to consumers through a simple web interface. It also ignited a public debate about deepfake audio and the safeguards needed around synthetic voices.

VALL-E (Microsoft, 2023)

Microsoft Research reframed TTS as a language modeling problem. VALL-E treated speech as discrete audio tokens (from Meta's EnCodec codec) and predicted them autoregressively. Given just three seconds of reference audio, it could synthesize speech in that voice. This was a paradigm shift: one model, any voice, no fine-tuning.
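Some back-of-the-envelope arithmetic shows how little data a three-second prompt is once speech becomes tokens. The figures below are the codec settings reported for EnCodec at 24 kHz and 6 kbps (75 frames per second, 8 codebooks of 1,024 entries each); treat them as assumptions brought in for this sketch, since they are not stated in this article.

```python
import math

# Assumed codec settings (EnCodec, 24 kHz audio at 6 kbps).
FRAME_RATE_HZ = 75      # codec frames per second
NUM_CODEBOOKS = 8       # residual quantizer levels per frame
CODEBOOK_SIZE = 1024    # entries per codebook (10 bits each)

def token_count(seconds):
    """Number of discrete audio tokens needed to represent `seconds` of speech."""
    frames = int(seconds * FRAME_RATE_HZ)
    return frames * NUM_CODEBOOKS

def bitrate_bps():
    """Bits per second implied by the settings above."""
    return FRAME_RATE_HZ * NUM_CODEBOOKS * int(math.log2(CODEBOOK_SIZE))

print(token_count(3))   # 1800 tokens for a 3-second voice prompt
print(bitrate_bps())    # 6000
```

At that scale, a voice prompt is comparable in length to a few pages of text, which is what makes it practical to hand it to a language model as context.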

OpenAI TTS API (2023)

OpenAI released its TTS API in November 2023 with six built-in voices and fast streaming support. Its developer reach brought neural TTS to an even broader audience.

"Any sufficiently advanced technology is indistinguishable from magic." (Arthur C. Clarke)

F5-TTS and Kokoro (2024)

F5-TTS, from Shanghai Jiao Tong University, used flow matching to eliminate the need for phoneme alignment entirely. Kokoro, from Hexgrad, achieved near state-of-the-art quality with just 82 million parameters, small enough to run on-device. Both were released as open source, with Kokoro's weights under the Apache 2.0 license.

Sesame CSM (2025)

Sesame released CSM (Conversational Speech Model), designed for multi-turn dialogue. Unlike prior systems that synthesized one utterance at a time, CSM adapted prosody and tone across an entire conversation.

Multimodal LLMs absorb TTS

OpenAI's GPT-4o (2024) and Google's Gemini 2.0 demonstrated native voice input and output within the same model. TTS is no longer a separate module; it is becoming a built-in capability of large language models.

On the regulatory side, the FCC ruled in February 2024 that AI-generated voices in robocalls are illegal. The EU AI Act introduced transparency and disclosure obligations for synthetic voice content. The agreement that ended SAG-AFTRA's 2023 strike included protections against AI voice replication. Industry responses include inaudible watermarking and consent verification for voice cloning.

Get started with TTS

Telnyx runs text-to-speech on the same carrier network where voice calls terminate. That co-located architecture eliminates the inter-provider hops that add latency in multi-vendor pipelines.

To get started with TTS on Telnyx:

- Create a Telnyx account and generate an API key

- Choose a voice from the TTS API voice library

- Send text to the API and receive synthesized audio in real time

- Integrate with Telnyx Voice API for live call playback

- Monitor quality and latency from a single dashboard

Frequently asked questions

When was text-to-speech invented?

One of the earliest documented speech synthesis devices dates to 1779, when Christian Kratzenstein built tubes that reproduced vowel sounds. The first full TTS system that converted arbitrary text to speech appeared in the late 1960s in Japan.

What was the first TTS software?

MITalk, developed at MIT in the 1970s by Jonathan Allen and Dennis Klatt, was one of the first comprehensive text-to-speech software systems for English. DECtalk (1983) was the first widely successful commercial TTS product.

How does modern TTS work?

Most modern TTS systems use neural networks. A text encoder converts input text into an intermediate representation (typically a mel spectrogram), and a neural vocoder converts that spectrogram into an audio waveform. Some newer systems generate audio tokens directly using language model architectures.
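The "mel" in mel spectrogram refers to a frequency scale that spaces bands the way human hearing does: finely at low frequencies and coarsely at high ones. Here is a minimal sketch using one common conversion formula (other variants exist). The 80-band, 0 to 8,000 Hz layout is an assumed example configuration, not a setting taken from any particular system.

```python
import math

def hz_to_mel(hz):
    """Convert frequency in Hz to mels (a widely used log-based formula)."""
    return 2595.0 * math.log10(1.0 + hz / 700.0)

def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def mel_band_centers(n_bands, f_min, f_max):
    """Center frequencies (Hz) of n_bands spaced evenly on the mel scale."""
    low, high = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (high - low) / (n_bands + 1)
    return [mel_to_hz(low + step * (i + 1)) for i in range(n_bands)]

# Assumed example layout: 80 bands between 0 Hz and 8,000 Hz.
centers = mel_band_centers(n_bands=80, f_min=0.0, f_max=8000.0)
print(round(centers[1] - centers[0]), round(centers[-1] - centers[-2]))
```

The gap between neighboring bands grows toward the top of the range, so the acoustic model spends most of its output resolution where speech carries the most perceptually important detail.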

What is neural TTS?

Neural TTS refers to text-to-speech systems powered by deep neural networks rather than hand-written rules or HMM-based statistical models. DeepMind's WaveNet (2016) was the first major neural TTS system. Google's Tacotron 2 (2017) was the first to achieve near-human quality in controlled listener tests.

Can AI clone any voice?

Modern systems like VALL-E can replicate a voice from as little as three seconds of audio. However, quality improves with more reference material. Ethical and legal frameworks around voice cloning are evolving rapidly, with regulations like the EU AI Act and FCC rulings placing restrictions on unauthorized use.